Chat GPT4 aces the LSAT.

19 Tuesday Aug 2025

Posted in Uncategorized

12 Tuesday Aug 2025

Posted in Uncategorized

Pumpkin Person rating: 9/10

Director: Ali Abbasi, Writer: Gabriel Sherman Budget: $16 million Box office: $12 million

I’ve been wanting to see this movie for a long time, but apparently movie theaters feared lawsuits, because the few that would show it would only do so on weekday afternoons when I was working. This is not surprising given that a Trump spokesperson called the film “garbage” and “pure malicious defamation, should not see the light of day, and doesn’t even deserve a place in the straight-to-DVD section of a bargain bin at a soon-to-be-closed discount movie store. It belongs in a dumpster fire.”

According to Trump spokesperson Steven Cheung “We will be filing a lawsuit to address the blatantly false assertions from these pretend filmmakers…This garbage is pure fiction which sensationalizes lies that have been long debunked. As with the illegal Biden Trials, this is election interference by Hollywood elites, who know that President Trump will retake the White House and beat their candidate of choice because nothing they have done has worked.”

Finally I saw the film from the comfort of my home, which I prefer anyway. The film depicts Trump’s life from the mid-1970s to the mid-1980s. It starts when the twenty-something Trump is at a private club with a young woman. He notes that across the room from them is one of the only two billionaires in America and explains that becoming a billionaire takes a certain gene.

Notorious closeted gay lawyer Roy Cohn (played by Jeremy Strong from HBO’s must-see show Succession) invites the awkward young man (but not his date) to his table to eat with an Italian mobster and other power brokers. Cohn feels sorry for the goofy, oafish yet handsome young Trump, takes him under his wing and teaches him how to be a winner (though the subtext is that Cohn has a crush on him), much to the jealousy of Cohn’s other blond young friend, who seems to feel the even younger Trump is being groomed as his replacement.

Many of the techniques that caused Scott Adams types to view Trump as a political chess master, were, according to this film, actually taught to him by Roy Cohn who is portrayed as the evil genius mastermind behind Trump’s success. More evidence for Ashkenazi high IQ.

Among the rules Cohn taught his young apprentice:

But in the end there is no victory for Cohn who finds himself brutally discarded once Trump no longer needs him.

I thought the film was clever in not trying to tell Trump’s entire life story, but instead focusing on a specific period: his initial rise from the outsider from Queens to the King of New York. No one personifies that city during the 1980s quite like Trump, and the film is peppered with nostalgic tunes like the Pet Shop Boys’ Always on My Mind and Baccara’s haunting Yes Sir, I Can Boogie to depict his ill-fated romance with first wife Ivana, who is portrayed as stunningly beautiful in her youth.

But perhaps the true star of the film is the city of New York itself, with its massive skyscrapers, bright lights and all-around vibrancy, seen in the panoramic view from high up in Trump Tower.

21 Monday Jul 2025

Posted in Uncategorized

For years HBDers have been citing twin studies to argue that IQ is up to 80% heritable. The logic seemed straightforward enough: take pairs of identical twins separated at birth (or shortly thereafter), raise them in different homes, and then give them an IQ test. When this was done in the famous Minnesota Twin study, the correlation was 0.75, suggesting 75% of the variance in IQ is caused by genes. Taking the square root of the correlation suggests an astonishing 0.87 correlation between IQ and genes, and that 87% of the individual differences in American IQs are caused by genes.
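The arithmetic in that last step can be checked in a couple of lines. This is only a sketch of the calculation described above, assuming the simple model in which the correlation between identical twins raised apart equals the share of IQ variance attributed to genes; the function name is made up for illustration.

```python
import math


def gene_iq_correlation(twin_correlation: float) -> float:
    """Return the implied gene-IQ correlation: the square root of the
    correlation between identical twins raised apart (assumed model)."""
    if not 0.0 <= twin_correlation <= 1.0:
        raise ValueError("twin correlation must be between 0 and 1")
    return math.sqrt(twin_correlation)


# Twin correlation quoted in the post for the Minnesota study.
r_twins = 0.75
print(f"variance share: {r_twins:.0%}")
print(f"implied gene-IQ correlation: {gene_iq_correlation(r_twins):.2f}")
```

The 75% figure is a share of variance, while the 0.87 figure is a correlation, so the two numbers are on different scales.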

Critics argue that the twins were not raised sufficiently apart to warrant such strong conclusions. Some like commenter RR argue that the very idea of inferring causation is absurd because genes and IQ interact. For example good genes cause good environments and bad genes cause bad environments so even if identical twins are perfectly separated into random environments at birth, the fact that they both have the same genes means they’ll both end up in similar environments by age 40, so does it really make sense to credit the genes for their IQ similarity when the genetic effect was mediated by environment?

My answer has always been “yes”, because even if environment were what directly caused someone to have a high or low IQ, it was their genes that made them smart or dumb enough to end up in a good or bad environment in the first place, relative to the one they started in, so genes were the ultimate cause of the IQ.

But then I did a thought experiment: Imagine that in the future they develop really good gene editing technology, and the richer your parents, the better the gene editing is. Now imagine we did studies of identical twins raised apart in this society. We might find heritability drops all the way to 0%, because when your identical twin is raised from birth in a much richer home, his adoptive parents can afford the best gene editing software money can buy, causing his intelligence to be twice yours.

Even if the IQ gaps between identical twins raised apart in such a society were 100% caused by genetic editing, twin studies would show them to be 100% caused by environment because the IQ correlation between identical twins raised apart in that society might be zero.

Even though it’s technically true that their environment caused their IQ differences since environment mediated access to gene editing, it would feel somehow wrong to say genes had no effect on their IQ, when literally 100% of the IQ differences were caused by gene editing.

So what I learned from this thought experiment is that heritability does not partition the variance into genes vs environment, but rather it partitions it into genes you were born with vs environment you were born with. Only in organisms that have very little ability to change their environment (like plants, or to some degree people living in strict caste systems like 1940s India) do twin studies capture the full spirit of heritability.
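The thought experiment can be put into numbers with a toy model. This is a rough sketch under assumptions that are mine, not part of any real study: IQ is the sum of a birth-genotype effect (identical for both twins) and a rearing-home effect (independent between twins placed in random homes), and the function name and variance figures are hypothetical.

```python
def twins_apart_correlation(var_birth_genes: float, var_rearing_home: float) -> float:
    """Expected IQ correlation between identical twins raised apart.

    Toy additive model: the twins share only the birth-genotype effect, so
    their correlation is that effect's share of the total variance.
    """
    total = var_birth_genes + var_rearing_home
    if var_birth_genes < 0 or var_rearing_home < 0 or total <= 0:
        raise ValueError("variances must be non-negative with a positive total")
    return var_birth_genes / total


# Today's world, using the 0.75 figure quoted earlier in the post.
print(twins_apart_correlation(75.0, 25.0))

# Gene-editing society: what the adoptive home buys swamps the genes you
# were born with, so the twin correlation collapses toward zero.
print(twins_apart_correlation(1.0, 999.0))
```

In the second call the "rearing home" term is physically genetic (purchased edits), yet the twin design files all of it under environment, which is the point of the thought experiment.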

06 Sunday Jul 2025

Posted in Uncategorized

[The following is a guest post by Ganzir and does not necessarily reflect the views of Pumpkin Person]

Source from where I got Atlas Shrugged and all quotations from it in this article: https://archive.org/details/in.ernet.dli.2015.458873

“When you work in a modern factory, you are paid, not only for your labor, but for all the productive genius which has made that factory possible: for the work of the industrialist who built it, for the work of the investor who saved the money to risk on the untried and the new, for the work of the engineer who designed the machines of which you are pushing the levers, for the work of the inventor who created the product which you spend your time on making, for the work of the scientist who discovered the laws that went into the making of that product, for the work of the philosopher who taught men how to think and whom you spend your time denouncing.”

“The machine, the frozen form of a living intelligence, is the power that expands the potential of your life by raising the productivity of your time. If you worked as a blacksmith in the mystics’ Middle Ages, the whole of your earning capacity would consist of an iron bar produced by your hands in days and days of effort. How many tons of rail do you produce per day if you work for Hank Rearden? Would you dare to claim that the size of your pay check was created solely by your physical labor and that those rails were the product of your muscles? The standard of living of that blacksmith is all that your muscles are worth: the rest is a gift from Hank Rearden.”

Ayn dear, if I were paid for all the productive genius which made that factory possible, I would need to spend a lot less time pushing levers and have a lot more time to spend denouncing you.

No, I would never dare to claim that the size of my paycheck was created solely by my physical labor, but neither should Hank Rearden claim that the size of his paycheck was created by any one person. Rand’s insight that infrastructure is a force multiplier is correct, but she was hardly the first person to think of it, although she might have been the first person to make the non-sequitur leap that this means capitalism good altruism bad. If you were digging a large hole by hand, and I gave you a shovel, you would become more productive because you could move dirt more quickly with the shovel than without it. However, you still had to put in work to move that dirt, so we both contributed to completing the job, which should be finished by throwing your copy of Atlas Shrugged into that hole and covering it back up. The upshot of these two paragraphs from John Galt’s raving is that, if I sit on my ass in the shade drinking lemonade while you dig that hole, then I dug the hole and you should thank me for the hard, hard work of sitting on my ass.

We all stand on the shoulders of giants, and also on the shoulders of dwarves. When I flip on a light switch, the light bulbs in that room would not turn on if there were nobody working at power plants. That should not be a surprise. The conveniences we tend to take for granted would vanish without people doing the work to keep things running behind the scenes. Rand’s mistake is thinking that the mega-capitalist keeps everything running behind the scenes. SpaceX would not exist if it could not draw on the innovations made by NASA, so Elon Musk is as indebted to the federal government as our rail-worker is to Hank Rearden, if not more so. Don’t you think Rand would object to that statement, truth be damned? If she were still alive, and she wanted to confront me in person, would her route to my house take her across the Interstate Highway System, which was sponsored by the federal government?

A gift from Hank Rearden? Notwithstanding that dubbing force multiplication a “gift” is uncharacteristically pro-altruistic coming from Aunty Ayn, this ignores the fact that Hank Rearden’s steel company (more like steal company) cannot function without the machines, scientists, and pushable levers that Rand thinks increase the wages of rank-and-file workers. Hank Rearden should thank them for his paycheck, not the other way around. Also note that, by equating the size of the laborer’s paycheck with how many tons of rails he makes each day, Rand implicitly claims that doing more work gets you paid more. I think we all know that, in real life, the correlation between how much work you do and how much you get paid is negative. Corporations tend to suffer from a condition, which might be called staff infection, wherein blue-suited meeting-attenders are remunerated an order of magnitude more than the blue-collared work-doers who, you know, actually do work.

How can Rand think that holding stock in a company implies that you had a share (pun intended) in all the work done by employees of that company? She even wrote, “the industrialist who built it”, as though Hank Rearden personally laid down every inch of track his company owns, possibly because she read a phrase like, “President Eisenhower built the Interstate Highway System”, and took it a teensy-weensy bit too literally. Yet even that pales in comparison to lauding, “the investor who saved the money to risk on the untried and the new”, who must be sweating blood from the back-breaking work of throwing a fraction of their spare cash at a high-risk investment while the rest goes into Treasury bonds. I suppose Rand’s reconciliation would be that, in her fayntasy world, there exists a one-to-one correspondence between Ubermenschen and tycoons. Please cross-reference a list of Nobel Prize winners with a list of Fortune 500 CEOs and see for yourself how much they overlap. In fairness, the book’s copyright page does say, “Names, characters, places, and incidents either are the product of the author’s imagination or are used fictitiously…”. Rand’s most imaginative act when writing Atlas Shrugged was naming the book after how much it weighs.

01 Tuesday Jul 2025

Posted in Uncategorized

I was listening to an AI expert and he was explaining that the way they trained ChatGPT to be so verbally intelligent was to get it to predict the next word in every sentence ever written or scanned onto the internet. Whenever its predictions were wrong, they would adjust the algorithm to mathematically improve its predictive accuracy, and after thousands of hours of brute trial and error, they were able to produce AIs that could score above 150 on some verbal IQ tests.
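To make "predict the next word" concrete, here is a toy sketch. It is not how ChatGPT works: real systems adjust a large neural network after each wrong guess, whereas this example just counts which word follows which in a tiny made-up corpus. The function names and the corpus are invented for illustration.

```python
from collections import Counter, defaultdict


def train_bigram_counts(text: str) -> dict:
    """Count, for every word, how often each other word comes right after it."""
    counts = defaultdict(Counter)
    words = text.lower().split()
    for current_word, next_word in zip(words, words[1:]):
        counts[current_word][next_word] += 1
    return counts


def predict_next(counts: dict, word: str):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


corpus = "the cat sat on the mat and the cat slept"
model = train_bigram_counts(corpus)
print(predict_next(model, "the"))  # "cat" follows "the" twice, "mat" only once
```

The more text the counts are built from, the better the guesses get, which is the same basic idea at a vastly smaller scale.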

However, intelligence is more than just verbal acumen; it’s the ability to adapt: to take whatever situation you’re in and turn it around to your advantage. Right now I am using my verbal skills to exploit social media, but through most of my day I relied on non-verbal skills like cooking dinner, navigating the remote control etc. Indeed over 99.999999999999999% of the universe doesn’t even have people or any other linguistic entity, so the true ability to adapt to any situation is arguably skewed in a non-verbal direction. Indeed it’s even been argued that one reason men have larger brains than women despite having similar full-scale IQs is that men have higher spatial IQ, which requires more processing power, because as they say, a picture is worth a thousand words.

Another group with high spatial IQ is East Asians, and despite having the highest spatial IQs on Earth, they are remarkably humble about their intelligence. “We might be good at video games,” they will tell me, “but the Caucasoids, the whites, the Jews, the South Asians; they created great literature”, which gives them a certain cachet in a world that worships the written word and has disdain for nerdy tech types.

However, as AI continues to make progress in verbal ability, verbal ability will be seen as cheap and robotic. According to one of the grandfathers of the AI movement, the problem with creating a truly intelligent AI is that even though they can increase verbal IQ by training AIs to predict the next word in any sentence, you can’t train AI to predict the next picture in any video. The reason is there are only 165,000 words in the English language, but the number of distinct images is seemingly infinite, so no amount of machine learning can train them to predict it, because how do you assign useful probabilities to images that only occur once in infinity?

Once East Asians realize they are the only race that can’t have their genius automated by computers, they may start regarding non-East Asians as genetically inferior. Just as Caucasoids spent centuries calling tropical ancestry people by sub-human terms like “monkey”, East Asians might start dehumanizing Caucasoids as “chat bots”.

This may even lead to physical confrontations as foreshadowed by this scene from the Netflix series Cobra Kai in which an East Asian is rejected from a fraternity and doesn’t take it well:

13 Friday Jun 2025

Posted in Uncategorized

It’s pretty rare that Friday the 13th occurs in June (the birthday of Jason Voorhees) so it’s time to take a look at his sick and tragic life.

07 Saturday Jun 2025

Posted in Uncategorized

I have long regarded talk show hosts as people of superior intelligence, and the great Phil Donahue was no exception. Perhaps there is no better example of this than when he verbally sparred with Ayn Rand. Although Miss Rand was Jewish, and Jews tend to have branching neurons that give rise to certain genetic diseases like Tay-Sachs, Donahue’s brain was twice as large as Miss Rand’s.

So it was a battle of brain size vs brain branching. This was in 1979, seven years before the even bigger brained Oprah would explode out of nowhere and replace Donahue as the #1 talk show host in America while replacing Miss Rand as the most influential woman on the planet.

Among Rand’s insights were:

Gates vs Musk: Who would Ayn Rand have admired more?

One of the prevailing themes of Ayn Rand’s books is that some men are just supermen who put the rest of us to shame by dominating our capitalist society. Although Bill Gates and Elon Musk are both very brilliant men, Gates is much more brilliant. If you go by their reported SAT scores, Musk’s IQ is around 140. Gates’s IQ is either 170 or 154, depending on whether you believe he scored in the high or low 1500s on the old SAT (reports vary). Since both men became the richest person in the world in highly g-loaded fields, it’s interesting to ask if there’s any reason to expect one genius to be so much smarter than the other.

Despite Gates being much smarter, Rand hated altruists, so she might have despised the Gates Foundation and applauded Musk’s demolition of USAID.

31 Saturday May 2025

Posted in Uncategorized

He’s an older Jewish man who was accused of rape after sexually inappropriate behavior.

She’s a young black woman who was accused of antisemitism after defending Palestinians in Gaza and indulging in conspiracy theories.

He was the King of movies. She is the Queen of new media.

Their unlikely friendship could itself have been one of the many great movies Weinstein produced. I’d call it, WHEN HARVEY MET CANDACE.

20 Sunday Apr 2025

Posted in Uncategorized

Using genetic data from Lee et al. (2018), Davide Piffer has calculated polygenic scores for education/cognition for various populations around the world. Although these scores have very modest predictive power at the individual level, at the group level they correlate extremely well with IQ.

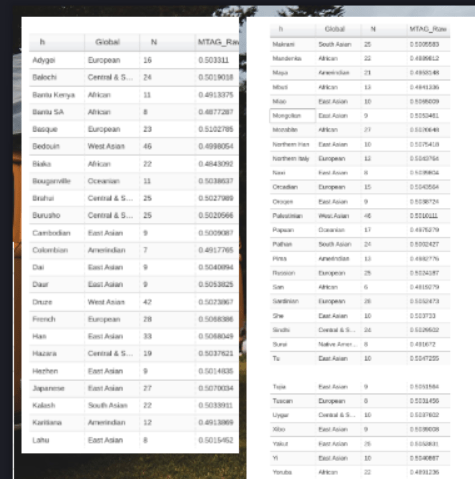

Below is a chart that reports these scores for 12 different “races” using two different polygenic scores (MTAG & PGS GWAS sig). In some cases the scores had to be inferred. For example the datasets did not have direct measures for Arctic people but because there was MTAG data on Mongolians and East Asians and because Mongolians are a hybrid of Arctic people and East Asians, their scores could be deduced.

The polygenic scores were converted to IQ equivalents by assigning the average European polygenic score an IQ of 99 (consistent with their psychometric scores) and assigning the average Bantu polygenic score an IQ of 80 (based on Richard Lynn’s 2006 estimate of how African Americans would average if they had no white admixture). From these two races, the IQs of all other races could be derived through linear extrapolation.
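The conversion described here is a straight line through two anchor points. The sketch below assumes the anchors are the European and Bantu raw scores listed in this post's chart (0.505 and 0.492 for MTAG, 49.167 and 46.83 for PGS GWAS sig); the function and variable names are hypothetical.

```python
def make_iq_converter(score_a: float, iq_a: float, score_b: float, iq_b: float):
    """Build a linear map from polygenic score to IQ equivalent,
    passing through the two anchor points (score_a, iq_a) and (score_b, iq_b)."""
    slope = (iq_a - iq_b) / (score_a - score_b)

    def convert(score: float) -> float:
        return iq_a + slope * (score - score_a)

    return convert


# Anchors: Europeans -> IQ 99, Bantu -> IQ 80, as stated in the post.
mtag_to_iq = make_iq_converter(0.505, 99, 0.492, 80)
pgs_to_iq = make_iq_converter(49.167, 99, 46.83, 80)

print(round(mtag_to_iq(0.498)))   # Papuans, MTAG raw score from the chart
print(round(pgs_to_iq(50.038)))   # Ashkenazi, PGS (GWAS sig) from the chart
```

Run on the chart's raw scores, this gives 89 for Papuans and 106 for Ashkenazi, matching the genetic IQ columns after rounding.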

The most striking thing about the data is the clear correlation between genetic IQ and climate. All five of the top ranked races have light skin or lived in a cold climate during the ice age. Meanwhile the bottom four all have “Negroid” morphology, with the bottom three having never left sub-Saharan Africa. It is interesting that the bottom three never independently invented agriculture and the bottom two never made the Neolithic transition at all, consistent with Richard Lynn’s theory that it was not until the last Würm glaciation about 20,000 years ago (which sub-Saharans did not endure) that humans became intelligent enough to take advantage of the wild grasses. By sadly killing everyone who was too dull to make fire, shelter and warm clothing, the last Würm glaciation probably raised North Eurasian IQ to near 90, and then only those with IQs of 93+ would make the leap to civilization. The exceptions are the Finns and the Arctic people, who more or less reached triple digit IQ despite remaining essentially cavemen.

| Population | Average polygenic MTAG raw score (calculated by Davide Piffer, derived from Lee et al. 2018) | PGS (GWAS sig) | Genetic IQ derived from polygenic MTAG | Genetic IQ derived from PGS (GWAS sig) | Average of the one or two genetic IQs |
| --- | --- | --- | --- | --- | --- |
| Ashkenazi | | 50.038 | | 106 | 106 |
| East Asians | 0.505 | 49.75 | 99 | 104 | 102 |
| Finnish | | 49.456 | | 101 | 101 |
| Europeans | 0.505 | 49.167 | 99 | 99 | 99 |
| Arctic people | 0.505 (estimated from the scores of Mongolians & East Asians) | | 99 | | 99 |
| Southeast Asians | 0.501 | | 93 | | 93 |
| South Asians & North Africans | 0.501 | | 93 | | 93 |
| Native Americans | 0.494 | 48.6 (I subtracted 55% white & 15% black admixture since sample was Hispanic) | 83 | 94 | 89 |
| Papuans | 0.498 | | 89 | | 89 |
| Bantu | 0.492 | 46.83 (I subtracted 25% white admixture since sample was Black Americans) | 80 | 80 | 80 |
| Pygmies | 0.484 | | 68 | | 68 |
| Bushmen | 0.482 | | 65 | | 65 |

Note the PGS (GWAS sig) data is from Table 5 of the paper Evidence for Recent Polygenic Selection on Educational Attainment and Intelligence Inferred from GWAS Hits: A Replication of Previous Findings Using Recent Data. The MTAG raw data Piffer used is here:

22 Saturday Mar 2025

Posted in Uncategorized

Since commenter Mugabe keeps mentioning Bermuda as though this tiny island debunks over 100 years of HBD research, I thought it would be a good time to revisit the discussion Mugabe had on this topic with Lion of the Blogosphere. Original discussion can be found here. Note Mugabe is posting under the name “ron burgundy”: