Today all human populations have the genetic potential to average IQs in the 85 to 105 range, with a species mean of, say, 97 (Euro norms). Scientists claim living humans have an average cranial capacity of around 1350 cc, but I believe this is way off, because unlike our well-nourished ancestors, most modern humans have been malnourished since the Neolithic transition. Even today, virtually everyone outside the developed world has suboptimal nutrition.

Perhaps the best estimate of living human brain size under First World conditions is the average brain size in the U.S., since the racial diversity of this country mirrors the species as a whole.

The average young American has a cranial capacity of at least 1418 cc, with a within-sex standard deviation of 91 cc. The average is probably closer to 1438 cc, since the data come from the army, whose members tend to be smaller than their civilian counterparts.

The correlation between IQ and brain size (among members of the same sex and country) is anywhere between 0.25 and 0.4, with 0.32 being my current best guess (it changes all the time). This means that for every one standard deviation (91 cc) difference in brain size, IQ differs by 0.32 standard deviations on average (the IQ scale has a standard deviation of 15).

If we extrapolate this logic to chimps, whose cranial capacities average 400 cc, which is 1032 cc or 11.34 SD less than the average human (under First World conditions), we’d expect an IQ that is 0.32 × 11.34 SD = 3.63 SD less than 97 (the human mean under well-nourished conditions).

In other words, chimps should have an average IQ of 43 (97 − 3.63 × 15 ≈ 43).
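The extrapolation above is a standardized regression: express the chimp–human gap in human SD units, multiply by the correlation, and convert back to IQ points. A minimal Python sketch of that arithmetic follows. The 1432 cc human mean is an assumption on my part: it is the figure implied by the 1032 cc gap (400 + 1032), which sits between the 1418 cc and 1438 cc estimates given earlier. The function name is hypothetical.

```python
def predicted_iq(brain_cc, human_mean_cc=1432.0, brain_sd_cc=91.0,
                 r=0.32, human_mean_iq=97.0, iq_sd=15.0):
    """Predict IQ from brain size with a standardized regression line.

    Assumes the within-human slope (r SDs of IQ per SD of brain size)
    can be extrapolated far outside the human range.
    """
    z_brain = (brain_cc - human_mean_cc) / brain_sd_cc  # brain size in human SD units
    z_iq = r * z_brain                                  # predicted IQ in SD units
    return human_mean_iq + z_iq * iq_sd                 # back to the IQ scale

chimp_iq = predicted_iq(400.0)
print(round(chimp_iq))  # 43
```

Since the correlation is only pinned down to the 0.25 to 0.4 range, swapping those ends in for the 0.32 guess moves the prediction to roughly 54 and 29 respectively.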

And yet that’s not what we find. Last March I wrote:

In 2007 there was a fascinating study that compared human 2.5-year-olds to chimps and other apes on a battery of intelligence tests. With the exception of social intelligence, where the human toddlers were way ahead, the apes and toddlers had the same intelligence.

In other words, chimps have the same intelligence as a 2.5-year-old (white) human.

What adult IQ does a mental age of 2.5 equate to? The question is a lot trickier than it seems. One could define adult mental age as 16+ and then use the age-ratio method (IQ = mental age ÷ chronological age × 100) to conclude that since 2.5 is about 16% of 16, a mental age of 2.5 equates to an adult IQ of 16. The problem with this method is that it assumes intelligence develops linearly with age, which is an oversimplification.
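The age-ratio method is simple enough to write out. As a sketch, with 16 taken as the adult ceiling as in the text, it gives 15.6, which rounds to the 16 quoted above. It inherits exactly the linearity assumption criticized in the paragraph. The function name is hypothetical.

```python
def ratio_iq(mental_age, chronological_age):
    """Classic ratio IQ: mental age divided by chronological age, times 100."""
    return 100.0 * mental_age / chronological_age

# Treating 16 as the age at which adult mental age is reached (the post's assumption)
print(ratio_iq(2.5, 16))  # 15.625
```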

What is needed is an actual intelligence test that’s been given to both adults and to toddlers and one where scores increase on an interval scale.

One such test is digit span, which shows virtually no Flynn effect. Since the earliest days of intelligence testing, it’s been known that by the age of three, a white child can repeat two digits, which probably means a 2.5-year-old can repeat one digit.

By contrast, U.S. adults average a forward digit span of 6.645 with a standard deviation of 1.35, and since races in the U.S. differ very little on forward digit span, this should be taken as the white adult distribution. This means that an adult who performs like a 2.5-year-old (digit span of 1) is (6.645 − 1) / 1.35 ≈ 4.18 standard deviations below the white adult average.
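The 4.18 figure is an ordinary z-score. A minimal sketch using only the mean, SD and toddler span given in the text; the helper name is hypothetical.

```python
def z_score(score, mean, sd):
    """Standard score: how many SDs a score lies above (+) or below (-) the mean."""
    return (score - mean) / sd

# Forward digit span: U.S. adult mean 6.645, SD 1.35 (figures from the text);
# a span of 1 is the post's estimate for a 2.5-year-old.
z = z_score(1, 6.645, 1.35)
print(round(z, 2))  # -4.18
```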

If we assume that most cognitive abilities are like digit span, then chimps (who score like 2.5-year-olds on most tested cognitive functions) perhaps average 4.18 standard deviations below white adults on the average test.

Does this mean their IQs average 4.18 SD below the average white adult? No, because if you score 4.18 SD below white adults on the average test, your composite score on a battery of tests is actually much lower. Why? Because it’s much rarer to average an extremely low score across a battery of tests than it is to score that low on any one test. Indeed, based on the intercorrelations of the WAIS-IV subtests, someone who is 4.18 SD below average on the average subtest would be 5.73 standard deviations (86 IQ points) below average on the composite score, so my best guess for the average IQ of chimps is 14 (white norms).
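The jump from 4.18 to 5.73 SD follows from how a sum of correlated subtests is standardized. If k subtests each sit z SDs from the mean and every pair correlates r̄, the sum has an SD of √(k + k(k − 1)r̄), so the composite lands z·√(k / (1 + (k − 1)r̄)) SDs out. The sketch below assumes equal intercorrelations and k = 10 core subtests. The r̄ ≈ 0.48 it uses is back-solved from the post’s own 4.18 → 5.73 figures, not taken from the WAIS-IV manual. Both function names are hypothetical.

```python
import math

def composite_z(mean_subtest_z, k, r_bar):
    """Standardized composite of k subtests that all sit at mean_subtest_z.

    Assumes every pair of subtests correlates r_bar and that the composite
    is the plain sum of standardized subtests, re-standardized afterwards.
    """
    return mean_subtest_z * math.sqrt(k / (1 + (k - 1) * r_bar))

def implied_r_bar(mean_subtest_z, comp_z, k):
    """Invert composite_z: which average intercorrelation links the two z-scores?"""
    ratio_sq = (comp_z / mean_subtest_z) ** 2
    return (k / ratio_sq - 1) / (k - 1)

K = 10  # assumption: 10 core subtests in the full-scale composite
r_bar = implied_r_bar(-4.18, -5.73, K)  # back-solved from the post's figures
z = composite_z(-4.18, K, 0.48)
print(round(r_bar, 2), round(z, 2), round(100 + 15 * z))  # 0.48 -5.73 14
```

With r̄ = 1 the composite equals the subtest score, and lower intercorrelations push it further out. That is the intuition behind “it’s much rarer to average an extremely low score.”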

So, contrary to what I’ve said in the past, the regression line predicting IQ from brain size in humans overpredicts chimp IQ by 29 points!

Their expected IQ is 43, but their actual IQ is 14.

This is because apes are not just small-brained humans, any more than humans are big-brained apes. No matter how big a chimp’s brain gets, it will never be organized as efficiently as a human’s.

This is because the human brain is spherical, which, as commenter pumpkinhead noted, is a uniquely efficient shape because it minimizes the distance between any two points, thus maximizing communication between neurons.
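Strictly speaking, what a sphere minimizes for a given volume is the average distance between two random interior points (and also the maximum distance, its diameter), not the distance between any particular pair. Below is a minimal Monte Carlo sketch comparing a unit ball with a prolate ellipsoid of equal volume. The elongation factor of 1.3 is an arbitrary illustrative assumption, not a measured brain proportion, and the helper names are hypothetical.

```python
import math
import random

def random_point_in_ellipsoid(rng, a, b, c):
    """Uniform point in the ellipsoid x^2/a^2 + y^2/b^2 + z^2/c^2 <= 1.

    Draw a uniform point in the unit ball (Gaussian direction, radius U^(1/3)),
    then stretch each axis; a linear map keeps the distribution uniform.
    """
    x, y, z = rng.gauss(0, 1), rng.gauss(0, 1), rng.gauss(0, 1)
    norm = math.sqrt(x * x + y * y + z * z)
    scale = rng.random() ** (1.0 / 3.0) / norm
    return (a * x * scale, b * y * scale, c * z * scale)

def mean_pair_distance(a, b, c, n_pairs=100_000, seed=0):
    """Monte Carlo estimate of the mean distance between two random interior points."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_pairs):
        p = random_point_in_ellipsoid(rng, a, b, c)
        q = random_point_in_ellipsoid(rng, a, b, c)
        total += math.dist(p, q)
    return total / n_pairs

ball = mean_pair_distance(1.0, 1.0, 1.0)
s = 1.3  # hypothetical elongation; axes (s, 1/sqrt(s), 1/sqrt(s)) keep the volume equal
elongated = mean_pair_distance(s, 1 / math.sqrt(s), 1 / math.sqrt(s))
print(f"ball: {ball:.3f} (exact 36/35 = {36 / 35:.3f}), elongated: {elongated:.3f}")
```

In this sketch, a 30% elongation raises the mean distance by only a few percent. The geometry favors the sphere, but how much that matters for neurons is a separate, empirical question.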

This explains why Homo heidelbergensis (600 ka to 300 ka), despite having a relatively large brain of 1280 cc, was virtually incapable of innovation. In their book The Rise of Homo sapiens: The Evolution of Modern Thinking, Frederick L. Coolidge and Thomas Wynn write:

…nothing much changed in Africa and Europe between 1.4 million years ago and 300,000 years ago. Hominins made the same types of stone tools they always had: hand axes and cleavers and a range of flake tools…

So despite having a near-human-sized brain, Homo heidelbergensis was still an ape in my opinion, so his expected IQ would fall on the ape regression line, not the human one, and thus his IQ was 29 points lower than that of an equally big-brained human.

And similarly for Neanderthals.

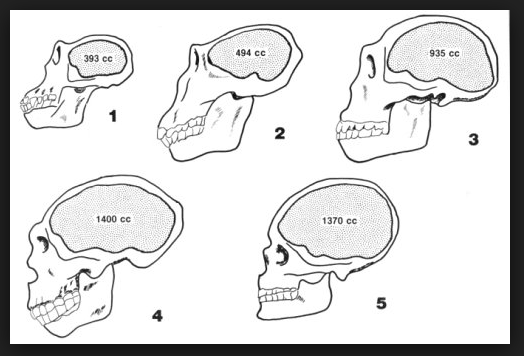

Source for image: https://answersingenesis.org/human-evolution/cranial-capacity-and-endocranial-casts/

It was not until 300 ka that incipient forms of H. sapiens appeared, and only then do we start to see a gradual transformation from ape-shaped brains to the globular brains of fully modern humans, paralleled by a few isolated signs of human culture. It’s not until 100 ka to 35 ka that the brain transformation is complete and we get the Upper Paleolithic revolution, the Neolithic transition, and the ability to finally leave Africa and rapidly colonize the whole world and beyond.

The simplest explanation for this is that IQ genetically increased 29 points over this period, as we leaped from ape-shaped brains to human-shaped ones.

Thanks for these interesting calculations. How about doing them with digits backwards?

http://www.unz.com/jthompson/digit-span-modest-little-bombshell/

The only problem with digits backwards is that it’s too difficult for 2.5-year-olds, and thus can’t be used to compare their intelligence to adult levels. That’s too bad since, as you know, it’s twice as g-loaded as digits forward.

If you could find the center of the Brian (the midpoint in each of the three dimensions), then estimate the average deviation from this center and normalize it relative to chimps and humans, we might get into phrenology. It would be preferable to do this on humans and ignore the chimps though, because chimps don’t manage to get the same environments as humans.

If this factor is significant, people living between latitudes 27 and 47 might be smarter, since their skulls are rounder.

Forward digits aren’t that g-loaded.

“It would be preferable to do this on humans and ignore chimps though, because chimps don’t manage to get the same environments as humans.”

Shots fired against Pill.

“If you could find the center of the Brian”

I do

Maybe Jews compensate for their smaller brain size by having it more spherical 🙂

I found this interesting article in Intelligence about a French study of 37K kids from varied backgrounds.

1) They found that 11-year-olds who were +2 SD in IQ were, 4 years later, +1 SD above the mean on knowledge testing (a national exam given to 15-year-olds).

2) They also found that money, diplomas, and type of school had zero effect on grades for them.

3) But the most interesting thing is that they said the test was skewed by −0.3 SD. They checked that this was not linked to pupils’ backgrounds. It was a nonverbal test using series of “cards”. It’s less spatial than Raven’s and has a symbolic aspect to it. Only 0.55% reached the 130 IQ level (instead of 2.5%), so they decided to take the top 2% and got a 126 IQ threshold. They never hypothesized that the French kids’ average IQ is 95.5, which is certainly the real IQ. And it is a big independent confirmation of the 96 IQ finding of Woodley of Menie.

NB: all children were tested independently and individually. Having a psychologist give each child the proper instructions normally costs €180 per child. That’s why the data are extremely precious.

They negotiated the price down to €30 per child because of the volume (the budget for the study was 1M).
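The step from a tail proportion to an average IQ in point 3 can be checked under a normal model. The sketch below assumes normality and an SD of 15, and asks what mean would put a given share of scores above a cutoff. Under those assumptions, the top-2%-at-126 figure implies a mean near 95, while the 0.55%-above-130 figure implies one nearer 92. The function name is hypothetical; `statistics.NormalDist` is from the Python standard library (3.8+).

```python
from statistics import NormalDist

def implied_mean(share_above, cutoff, sd=15.0):
    """Mean of a normal distribution in which `share_above` of scores exceed `cutoff`."""
    z = NormalDist().inv_cdf(1.0 - share_above)  # cutoff expressed in SD units
    return cutoff - z * sd

print(round(implied_mean(0.02, 126), 1))    # top 2% starting at 126
print(round(implied_mean(0.0055, 130), 1))  # 0.55% at or above 130
```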

Brain surface area correlates the most with spatial IQ, not verbal. Verbal IQ is a matter of interconnectedness.

Source?

Serious question: I’ve had moderate depression for about 4 years and most online resources say attending therapy is necessary for treating moderate and severe cases. Should I take their advice and find a therapist, or am I capable of treating myself?

I’m 19 and have had 2 unsuccessful stints with therapists. One of them was nearly a year long, and neither of them improved things. I live alone and don’t have any close friends.

Moderate depression is linked to anxiety and neuroticism; major depression, to schizophrenia and bipolar disorder. First, you should try to understand which pattern you belong to.

If you had your DNA analyzed, you could get polygenic scores to see where you stand on those compared to the general population. Imagine you discovered you have more anxiety and mood problems than 95% of people.

It could help you reason about what you are feeling and why, and then control it by reasoning it through.

I would never dream of paying someone to talk to them. It would feel like paying to talk to a pet. But that’s my French arrogance…

First of all, you need to reduce your level of internalized guilt. Most people who become depressed do so because of toxic levels of self-blame. They think all their problems are their own fault.

Secondly, you need to try to understand why you don’t have any close friends, because maybe it was… your fault, but in a way that is absolutely resolvable and important for you to recognize. Maybe you live in an environment where most people your age differ from you in personality and interests; maybe you’re trying to be friends with these people (the wrong way to search for friendship); maybe you have no way to fight back or defend yourself against harmful behavior, primarily verbal; maybe you have eroded self-esteem and need to learn how to rebuild it…

Thanks Bruno and Oprah! And I really mean it.

Another piece of advice:

Always be funny, or try to be funny! Laughing at life is often reinvigorating.

The only thing that matters is that life today is built on the shoulders of giants. We have progressed so far. No other species on this fucking planet even compares. Humans have domesticated, created, constructed, done everything. WE ARE SUPREME!

And it’s all due to our altruism. Our empathy is what differentiates us, not our intelligence alone. We needed empathy to truly understand one another, look out for each other, and build a world that was better for us all. This is the genius’s legacy. His altruism is the beauty for which this world was built.

Genius shall and will be rewarded, for the many benefit from the few.

Empathy is not “just” affective but also cognitive: it is our capacity to project our perception into other perspectives and memorize their characteristics.

Yes, but do you include in this « cognitive aspect » the strictly semantic one: the propositional content of what we think, excluding memories or imagery? Can someone have empathy through strictly semantic consideration? My guess is yes, but I am not sure, because I lack the inner experience of most non-semantic context.

Empathy starts with a sensory approach. Empathy is putting yourself in another perspective, figuratively speaking, of course. As when we can detect the features of a rose…

Empathy is not about imagination; it’s about reasoning, first of all. Can you think about the effects of your actions on other people, or on other elements of reality?

We are so complex that memes like “the brain came up with the word brain” are real. The complexity of one human mind is probably so magnificently incredible that it can create infinite universes. That is what we should be grateful for. We are living, breathing, progressing. With every second that passes in this universe, so does our life. It is a beautiful thing. Let’s enjoy it.

So we should just ignore the fact that people are potentially getting more stupid?

David, I think it kind of depends on whether you believe in a divine existence, or that the Universe is sentient and all. If those two things are true, then we can conclude that every step towards the end of time will lead to greater and greater knowledge, experience, etc., because we have already progressed further than anything that lived in the past, in a chronological sense. So if time moves forward, the existence that moves with it must also be greater than that of the past. I hope that makes sense. What I’m saying here is that the past is less excellent than the future in every way. Even if everything else were subjectively judged to be going south, we could conclude that it was meant to be and part of time moving forward. Maybe I’m like Pangloss from Voltaire’s Candide, but it is important to recognize that everything that happens is good and everything that doesn’t happen is bad. At least that’s the philosophy I subscribe to.

Video game performance is what you want to use here.

I’ve thought about this a lot. I noticed how I would play some game as a kid, then 15 years would pass, I’d think about it, go play it again, and easily get past places where I got stuck as a kid, getting much further on my first playthrough as an adult than I ever could playing it over and over as a kid.

Plus, the games are scored, and the code for the game never changes, so it allows direct comparison, like track and field, swimming, or weightlifting.

It’s not that much different from when I took the old SAT at 12, then again in high school a couple of times, and compared those performances, except that kids can play video games, but they can’t take scholastic tests seriously.

Yes, comparing humans and chimps on g-loaded chronometric tasks would be interesting, though I suspect many tasks that are g-loaded in humans would overpredict ape intellect because of qualitative differences in intelligence.

Puppy should try playing a few games. We can add each other and make our massive brains compete through the medium of entertainment. Puppy would enjoy the lights.

https://www.theguardian.com/world/2019/jan/13/james-watson-scientist-honors-stripped-reprehensible-race-comments

Hahaha. I feel sorry for him. They asked him to repent his satanic views and he said ‘and yet it moves’ when asked if he felt sorry.

https://www.telegraph.co.uk/news/2018/10/07/old-people-struggle-survive-south-korea-becomes-aged-society/

oooh these amazing people from the far east… [on avg, on vga, on agv]

All this debate about IQ precipitated by that Arab could easily be reduced or concluded by noting the complete lack of IQ tests on people’s creativity levels, which is what I’ve been saying in the HBD-sphere for a decade.

IQ simply doesn’t measure, or even touch on, the most important component of human intelligence after self-awareness: creativity. Thanks (or not) to creativity, we live in a world absolutely populated by creative inventions, even our language, even ourselves.

IQ measures our capacity to memorize things, especially related to literacy and numeracy. If we focus too much on these fundamental but not absolute skills and forget creative and reasoning skills, obviously we will miss creativity and find only a weak correlation with IQ.

It’s not wrong to say that people with large and accurate vocabularies or mathematical facility are smarter in both respects, duh. But that doesn’t mean they are absolutely smarter.

Intelligence, contrary to nerd thinking, is not just about intellectual behavior [often emphasized on the technical side: learning, memorization, restoration, application] but about EVERYTHING we do, all our behaviors, obviously including our reproductive behavior, for those who have this inclination. Being so immersed in civilization makes us forget that intelligence is a basic, fundamental behavior that encompasses all our behaviors.

In the same way that schizophrenia is milder when the affected individual has a higher IQ than when he has a lower one, because he has more intellectual resources and more self-control to deal with it… it’s expected that higher IQ facilitates higher creativity, but what most defines creativity in its expression is specialization. All discoveries happened when an individual became so fascinated by a narrow range of things or knowledge, went so deep into it, and looked in absolutely unbiased ways at details nobody else saw. When you truly look that deeply at specific things, you can perceive connections with other things that went unnoticed before.

A lot of highly creative people are super-specialists and may not score higher on traditional generalist IQ tests. There is a lot of diversity among cognitive sub-traits as well as non-cognitive sub-traits.

For sure, concluding that IQ is a pseudoscience is quite stupid. IQ is an incomplete science, maybe more incomplete than others.

No, I prefer the narrowest definition of only logical thinking. The wider definition – equating g with success aptitude as a member of the human species or even among all species – exposes to inconsistencies and Talebs or RR critics. Power to deduce or induce validly would do the job for me. And the power of g is that it’s a very useful aptitude.

But intelligence is more than just g. When Binet created the first psychometric intelligence test he did so without considering g so IQ tests would still exist even if g were never discovered. g simply makes it easier to measure intelligence because it makes each score more predictive of other scores, but even if there were no g factor, some would still be more cognitively gifted than others. Indeed certain cognitive impairments like fetal alcohol syndrome are uncorrelated with g, yet still very real deficits in intelligence.

The problem is that IQ, intelligence and G, are each used with several meanings, and I understand « g » as a statistical construct not to debate intelligence any more : the common factor of all mental tasks. Mental tasks being defined (in a circular way) as all activities that positively correlate with g.

Then I agree that there could be an intelligence without « g » and that in that case, this intelligence wouldn’t be « deductive or inductive valid reasoning aptitude ». But the fact is that there is always a « g » for all mental tasks. That’s why criticism of « g » or « IQ » related to real world value misses the point (in my opinion). Even if I know you like the predictive power of g, and would probably like to deduce « g » from people standing in different real life aspects.

What I would like to see are the concrete measurable consequences (for example, for learning abilities) of « g », to discover the real shape of the distribution.

The problem is that IQ, intelligence and G, are each used with several meanings, and I understand « g » as a statistical construct not to debate intelligence any more : the common factor of all mental tasks. Mental tasks being defined (in a circular way) as all activities that positively correlate with g.

I don’t think g is based on circular logic. If it were, it would be pretty meaningless. There could be mental abilities that don’t correlate with g, or even correlate negatively with g, but no one has ever discovered them. Mental abilities are simply voluntary behaviors on which people can be ranked by a clear standard of proficiency and that do not have strong correlations with any physical abilities. In theory, factor analysis could have yielded no single g factor, but rather multiple independent factors like verbal, spatial, and working memory; it just so happens that all the broad domains positively correlate, since all the mental abilities themselves do.
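The point about factor analysis can be illustrated with the first principal component of a correlation matrix, a common quick stand-in for g. A proper factor analysis would also separate shared from unique variance, so this is only an approximation. When every correlation is positive, the leading eigenvector has loadings that all share one sign (the Perron–Frobenius theorem), so a general factor falls out automatically. The 4×4 matrix below is invented purely for illustration and is not real test data. The function name is hypothetical.

```python
import math

def first_component(corr, iterations=500):
    """Leading eigenvalue and loadings of a correlation matrix via power iteration.

    Loadings are eigenvector * sqrt(eigenvalue), the usual principal-component
    loadings for standardized variables.
    """
    n = len(corr)
    v = [1.0 / math.sqrt(n)] * n  # unit-length start with all-positive entries
    eigenvalue = 0.0
    for _ in range(iterations):
        w = [sum(corr[i][j] * v[j] for j in range(n)) for i in range(n)]
        eigenvalue = math.sqrt(sum(x * x for x in w))  # ||A v|| for unit v
        v = [x / eigenvalue for x in w]
    return eigenvalue, [x * math.sqrt(eigenvalue) for x in v]

# Hypothetical correlations (invented for illustration, not real test data)
labels = ["verbal", "spatial", "working memory", "processing speed"]
corr = [
    [1.00, 0.50, 0.45, 0.30],
    [0.50, 1.00, 0.40, 0.35],
    [0.45, 0.40, 1.00, 0.30],
    [0.30, 0.35, 0.30, 1.00],
]
eigenvalue, loadings = first_component(corr)
for name, loading in zip(labels, loadings):
    print(f"{name:>16}: {loading:.2f}")
print(f"share of variance: {eigenvalue / len(corr):.0%}")
```

If some entries were negative, nothing would force the loadings to share a sign. That is the sense in which a single g factor is an empirical finding rather than a guaranteed output of the method.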

Then I agree that there could be an intelligence without « g » and that in that case, this intelligence wouldn’t be « deductive or inductive valid reasoning aptitude ». But the fact is that there is always a « g » for all mental tasks. That’s why criticism of « g » or « IQ » related to real world value misses the point (in my opinion). Even if I know you like the predictive power of g, and would probably like to deduce « g » from people standing in different real life aspects.

Not sure what you’re saying here.

What I would like to see are the concrete measurable consequences (for example, for learning abilities) of « g », to discover the real shape of the distribution.

The problem is that g can’t be directly observed; only g-loaded cognitive abilities can. Some of these, like working memory and vocabulary, seem to have normal-ish distributions. And yet equally g-loaded spatial or mathematical tasks seem to have an explosive distribution where some people are thousands of times better than others.

“No, I prefer the narrowest definition of only logical thinking.”

Which encompasses any behavior…

“The wider definition – equating g with success aptitude as a member of the human species or even among all species – exposes to inconsistencies and Talebs or RR critics.”

Bruno,

I often don’t understand what you mean. Could you quote the part of my comment you disagree with and give your counterargument?

IQ doesn’t measure heuristics; if it did, all religious people would have deductions in her scores.

…their scores…

“No, I prefer the narrowest definition of only logical thinking. The wider definition – equating g with success aptitude as a member of the human species or even among all species – exposes to inconsistencies and Talebs or RR critics. ”

Actually, RR’s criticism of IQ conflicts more with your thesis than Santo’s does (or whoever that person is).

It makes no sense to compartmentalize intelligence as a single module if the entire nervous system is responsible for the mental coordination of the body.

Sasquatches are people too

What is your stance on climate change?

I just find it really weird how you can write about this stuff and also like watching women’s daytime talk shows. Doesn’t make any sense.

When I was a kid you had very high-quality daytime talk shows like Phil Donahue (whom many men admire), which led to me watching his #1 rival Oprah, and because of my fascination with race, I became very interested in Oprah, and then when I discovered her head size, the fascination increased given my interest in HBD. Also, as a horror fan, I’m interested in freaks of nature & daytime talk shows were kind of like modern-day freak shows in those days. So daytime talk shows from the late 80s to the 90s have nostalgic value for me.

In France, one channel likes to rerun shows from the ’80s. One had something called the play stud (« play mec » in French). It was basically a Chippendale striptease at 4 pm, when the kids and stay-at-home mums were watching TV. Very weird times.

Pill likes celebrity gossip & spray tans. That’s just as feminine as liking daytime talk shows.

Of course, even the ads on Puppy’s blog feature a black woman. Puppy’s promotion of black women is bordering on Hidden Figures-level derangement.

@Partisangirl

Jan 9

We’re told to conclude from these maps that the highest-IQ people are the Ashkenazi Jews, and we are told to accept that this is genetic and that they are a race unto themselves. But there is no factual basis for this. High IQ or not, Ashkenazi Jews are not a race; there are only 5 races.

HAHAHAHAHAHAHAHAHA

I’m going to suggest they actually use a more realistic description, like “CNN subscriber” or “really politically correct person masquerading as someone who’s not.”

His gravatar and birth year make it humorous indeed…

RR gives no fucks whatsoever.

http://www.creativitypost.com/science/your_brain_knows_more_than_you_do

A DIFFERENT KIND OF SMARTS

(…)

Until recently, it was assumed that differences in cognitive ability were almost entirely due to analytic thought—the kind of thought measured by IQ tests. The idea was that people differ greatly in analytic ability; they don’t differ much in the kind of unconscious associative processes on which intuition is based. We now know that this view is wrong.

Psychologist Scott Barry Kaufman and his colleagues gave their participants a battery of cognitive tests, including both tests of analytical ability and tests of unconscious intuitive thought. Just as there are substantial differences among people in analytic intelligence, they also found substantial differences in intuitive ability. And these two types of cognitive ability weren’t strongly related to each other—just because you’re good at one doesn’t mean that you’re good at the other.

Intuitives—people who are high in intuitive ability and are close relatives of our Insightfuls—have a particular type of personality. They are impulsive thinkers who appear to shoot from the hip. This is because the intuitions that they rely on can often be quicker than the lumbering methodical thought of Analysts. Intuitives are also high in a personality trait known as “openness,” which means that they tend to focus on their experiences of patterns and feelings rather than on their analytic thoughts.

The fact that some people really are more intuitive than others goes a long way toward explaining why some trust their intuitions while others rely more on analytic thought. Most people generally have a sense of what they are good at. Not everyone has accurate self-knowledge, of course. People tend to be overconfident about their cognitive abilities, which is why students frequently wrap up a test feeling that they aced it when they, well, didn’t. But most people do seem to have some appreciation for their intellectual strengths and weaknesses. They go with what seems to work best for them, which, for Intuitives, are the greased associative pathways that allow ideas to ping one another in an unconscious chain reaction. (…)

>This is because the human brain is spherical which as commenter pumpkinhead noted, is a uniquely efficient shape because it minimizes the distance between any two points, thus maximizing communication between neurons.

This is bullshit. An inch or two of elongation doesn’t make any difference.

“This is because the human brain is spherical which as commenter pumpkinhead noted, is a uniquely efficient shape because it minimizes the distance between any two points, thus maximizing communication between neurons.

This explains why Homo heidelbergensis (600 ka to 300 ka), despite having a relatively large brain of 1280 cc, was virtually incapable of innovation.”

May sound weird coming from me, but absolute brain size would probably have a greater effect on intelligence than shape. The only benefit I can see from shorter connections is faster processing speed, which really doesn’t affect actual capacity. I’ll look more into it. The final article you cite does suggest globularity was mostly due to rewiring, at first.

Humans have of course been innovating since before behavioral modernity, it’s just relatively small in comparison. According to the same study globularity became associated with facial changes around 130,000 years ago. This implies sexual selection had increased within this time. Couple this with the possibility that humans reached the tipping point to where their intelligence outmatches the difficulty of ecological pressures. It would select for individual variation within a tribe as well as a general culture that promoted unity because of increased social competition between tribes.

May sound weird coming from me, but absolute brain size would probably have a greater effect on intelligence than shape. The only benefit I can see from shorter connections is faster processing speed, which really doesn’t affect actual capacity.

I think it’s much more than speed, or maybe speed is more than just speed. If neurons can communicate more efficiently, it implies problem solving strategies themselves will be more efficient. If the best solution to a problem is located too far away in your brain, the problem may fade from working memory before the distant brain region gets the message.

Humans have of course been innovating since before behavioral modernity, it’s just relatively small in comparison.

Astronomically small in comparison. If you compare our technology 1.4 Ma to 300 ka, it would take an expert to notice any difference, but if you compare our technology 40 ka to now, even a toddler can see a HUGE difference.

According to the same study globularity became associated with facial changes around 130,000 years ago. This implies sexual selection had increased within this time.

Or it implies we figured out how to consume meat more efficiently (mastered the actual making of fire or better cutting tools) and thus no longer needed huge jaws. Or maybe it was self-domestication, or maybe selection for neuroplasticity selected for neoteny and thus baby faces.

Couple this with the possibility that humans reached the tipping point to where their intelligence outmatches the difficulty of ecological pressures. It would select for individual variation within a tribe as well as a general culture that promoted unity because of increased social competition between tribes.

Well, ecological pressures would have increased once we left Africa since, unlike Neanderthals, our bodies were adapted only to warmth. Add to that the Toba supereruption which killed off virtually all of our food 75 ka and the ecological pressures to get smarter would have been extreme.

“or maybe speed is more than just speed. If the best solution to a problem is located too far away in your brain, the problem may fade from working memory before the distant brain region gets the message.”

Good point, but I’m not sure speed would be as useful for novel problems. Working memory contributes to how we solve novel problems, but I’m unsure how strong the correlation is between the efficiency of glutamate distribution throughout the nervous system and processing speed.

“If you compare our technology 1.4 Ma to 300 ka, it would take an expert to notice any difference, but if you compare our technology 40 ka to now, even a toddler can see a HUGE difference.”

Well, technology is not always the best indicator of intelligence. Most HBDers would assume that there are dysgenic trends in chronometric g (though I’m not sure I advocate this), despite the fact that our innovation rate is exponential compared to ancient humans.

Also, I’m more talking about the variation between and within tool-sets not necessarily the increased complexity.

“Or it implies we figured out how to consume meat more efficiently (mastered the actual making of fire or better cutting tools)”

Pretty sure that’s been refuted. Even before fire, we were able to eat rotten (but healthy) food.

“maybe selection for neuroplasticity selected for neoteny and thus baby faces.”

Indeed, increased social competition leads to more within-tribe cohesion, which requires social cooperation; this in turn selects for neuroplasticity via globularity. Increased social competition implies less direct ecological pressure. Not to say the environment was not a strong selective force, but at that point (100,000 years ago) hominins with similar brain sizes were more of a threat. By Homo erectus we were already apex predators. This extra free time (when we weren’t at war) allowed more individual variation to flourish, which is when we see social innovations like dance, music, and art.

“Add to that the Toba supereruption which killed off virtually all of our food 75 ka and the ecological pressures to get smarter would have been extreme.”

Yeah, about that…..

http://johnhawks.net/weblog/reviews/climate/toba-bottleneck-didnt-happen-2018.html

“Good point, but I’m not sure speed would be as useful for novel problems. Working memory contributes to how we solve novel problems, but I’m unsure how strongly variation in the efficiency of glutamate distribution throughout the nervous system correlates with processing speed.”

Well, what we call lateral thinking seems to be all about our ability to connect seemingly unrelated ideas. It’s tempting to think this relates to the ability to connect widely separated neurons. This is all very speculative, though. There’s been lots of research on brain size, much less on brain shape.

“Well, technology is not always the best indicator of intelligence. Most HBDers would assume that there are dysgenic trends in chronometric g (though I’m not sure I advocate this), despite the fact that our rate of innovation is exponential compared with that of ancient humans.”

Each generation stands on the shoulders of the one before it, so even if the current generation were shorter (metaphorically speaking) than the one before it, it would stand taller. However, prior to round-shaped brains, it seems humans didn’t have much ability to stand on the shoulders of their ancestors, so each generation stood only slightly taller than the one before it, and you had near-stasis for well over a million years.

“Also, I’m talking more about the variation between and within tool sets, not necessarily the increased complexity.”

There was some progress but it was more like 1% improvement every 100,000 years, whereas by the Upper Paleolithic, it was more like 1000% progress every 1000 years. Such a huge acceleration in cultural evolution might imply a leap in biological evolution especially since it’s paralleled by a brain shape change. Of course correlation does not prove causation, but it is suggestive.
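To put rough numbers on that contrast, the sketch below converts each of the comment's illustrative rates into a continuous per-year growth rate and takes their ratio. The figures are the comment's back-of-the-envelope guesses, not measurements, and the sketch assumes “1000% progress” means an increase of 1000% (an 11-fold gain); the 10-fold reading is printed alongside for comparison.

```python
import math

def log_rate_per_year(fold_change: float, years: float) -> float:
    """Continuous growth rate per year implied by a fold change over a period."""
    return math.log(fold_change) / years

# "1% improvement every 100,000 years" -> a 1.01-fold change over 100,000 years
early = log_rate_per_year(1.01, 100_000)
# "1000% progress every 1000 years", read here as a 1000% increase (11-fold);
# the alternative 10-fold reading is computed for comparison
late_11x = log_rate_per_year(11.0, 1_000)
late_10x = log_rate_per_year(10.0, 1_000)

acceleration = late_11x / early
print(f"acceleration (11-fold reading): {acceleration:,.0f}x")
print(f"acceleration (10-fold reading): {late_10x / early:,.0f}x")
```

Either reading implies an acceleration of roughly four orders of magnitude (about 24,000-fold with the 11-fold reading), so the conclusion does not hinge on how “1000%” is interpreted.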

“Pretty sure that’s been refuted. Even before fire, we were able to eat rotten (but healthy) food.”

Because we had bigger jaws and more prognathous faces. Once we had the intelligence to cook our meat and cut it with increasingly better tools, that started to go away.

“maybe selection for neuroplasticity selected for neoteny and thus baby faces.”

“Indeed, increased social competition leads to more within-tribe cohesion, which requires social cooperation; this in turn selects for neuroplasticity via globularity. Increased social competition implies weaker direct ecological pressures. That’s not to say the environment was not a strong selective force, but at that point (100,000 years ago) hominins with similar brain sizes were more of a threat. By Homo erectus we were already apex predators. This extra free time (when we weren’t at war) allowed more individual variation to flourish, which is when we see social innovations like dance, music, and art.”

Though humans only developed the ability to drive large animals to extinction about 50 ka (around the time of behavioral modernity).

And thanks for the Toba bottleneck link. I’m surprised to learn it’s been debunked because it supposedly explained why all modern humans are roughly 99.9% identical genetically and why even the most distantly related races are more similar to each other than two different chimps in the same troop. Perhaps that’s been debunked too?

“Working memory contributes to how we solve novel problems”

I thought working memory is good for solving familiar or convergent problems, because we are accessing internalized learning and applying it to a specific demand.

“Well, technology is not always the best indicator of intelligence. Most HBDers would assume that there are dysgenic trends in chronometric g (though I’m not sure I advocate this), despite the fact that our rate of innovation is exponential compared with that of ancient humans.”

I think they are comparing today with “Victorian times” (the 19th and early 20th centuries), not with ancient humans.

“However prior to round shaped brains,”

I don’t think it’s specifically round brains that cause behavioral modernity. Round brains are just an efficient way for evolution to cut corners when selecting for intelligence.

“Such a huge acceleration in cultural evolution might imply a leap in biological evolution especially since it’s paralleled by a brain shape change. Of course correlation does not prove causation, but it is suggestive.”

The biological change begins a little over 100,000 years ago. The cultural change is also not that dramatic; instead it is a gradual increase in the specialization of intra- and inter-tool-kit variation. What’s actually impressive during this time is the amount of unnecessary effort we see Homo sapiens put into their projects. Neanderthals got along fine through multiple ice ages, and they were behaviorally modern, yet they don’t hold a candle to the amount of detail Homo sapiens prioritized. Time is an ecological constraint.

“Because we had bigger jaws and more prognathous faces. Once we had the intelligence to cook our meat and cut it with increasingly better tools, that started to go away.”

Yes, our jaws have progressively gotten smaller, but there was a more dramatic change over a relatively short period about 130,000 years ago, which parallels increases in globularity.

“Though humans only developed the ability to drive large animals to extinction about 50 ka (around the time of behavioral modernity)”

They had that ability before the Upper Paleolithic. It’s far easier to cause extinctions when the animals are not familiar with you as a threat. It’s also easier if there are two apex predators hunting the same type of game.

“Perhaps that’s been debunked too?”

I heard a long time ago that our genetic homogeneity was exaggerated, but I don’t remember the link.

“I don’t think it’s specifically round brains that cause behavioral modernity. Round brains are just an efficient way for evolution to cut corners when selecting for intelligence.”

Not sure what part you’re disagreeing with. If round brains (largely) cause intelligence and if intelligence (largely) causes behavioral modernity, then round brains (largely) caused behavioral modernity (by causing intelligence). Natural selection for intelligence may have caused round brains.

“The biological change begins a little over 100,000 years ago. The cultural change is also not that dramatic; instead it is a gradual increase in the specialization of intra- and inter-tool-kit variation. What’s actually impressive during this time is the amount of unnecessary effort we see Homo sapiens put into their projects. Neanderthals got along fine through multiple ice ages, and they were behaviorally modern, yet they don’t hold a candle to the amount of detail Homo sapiens prioritized. Time is an ecological constraint.”

Were Neanderthals behaviorally modern? Cultural change is hard to quantify, but our collective adaptability certainly increased, because for 250,000 years our species was confined to just one continent, and then in just the last 50,000 years, we conquered five more continents. So the speed at which we colonized continents jumped from one per 250,000 years to one per 10,000 years. That’s a 25-fold increase! This implies a major change, either in our genotype, our culture, or the earth.
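The rate comparison is simple enough to check directly. A minimal sketch using exact fractions, assuming (as the comment does) one continent occupied over the first 250,000 years and five more over the last 50,000:

```python
from fractions import Fraction

# Figures from the comment: one continent over ~250,000 years,
# then five more continents over the last ~50,000 years.
early_rate = Fraction(1, 250_000)    # continents per year
recent_rate = Fraction(5, 50_000)    # continents per year

years_per_continent_recent = 1 / recent_rate
speedup = recent_rate / early_rate

print(f"recent pace: one continent per {years_per_continent_recent} years")
print(f"speed-up: {speedup}-fold")
```

Using `Fraction` keeps the arithmetic exact, so the 10,000-year pace and the 25-fold speed-up come out as whole numbers rather than floating-point approximations.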

“Yes, our jaws have progressively gotten smaller, but there was a more dramatic change over a relatively short period about 130,000 years ago, which parallels increases in globularity.”

Citation?

“Though humans only developed the ability to drive large animals to extinction about 50 ka (around the time of behavioral modernity)”

“They had that ability before the Upper Paleolithic. It’s far easier to cause extinctions when the animals are not familiar with you as a threat.”

But we apparently didn’t have the ability to come in contact with mammals unfamiliar with us before 50 ka because we were confined to Africa.

Thanks for the reply, but I can’t find its location. Anyway, you asked

“Not sure what part you’re disagreeing with.”

And it was that an important factor was left out of your analysis. In large brains, under low selective pressure for intelligence, selective pressure for a shape that minimises concussion will dominate. In small brains this factor is absent. Heavy modeling is required here, but the naive approach would be to look at the shape of smaller brains, where higher intelligence is the only driving force. Thus our default assumption should be that a large brain, now susceptible to shear forces, that still maintains the shape of smaller ancestral brains is likely to have been under greater selective pressure toward higher intelligence.

“Natural selection for intelligence may have caused round brains.”

I guess we don’t disagree, then.

“Were neanderthals behaviorally modern?”

I believe so. They had more or less the same suite of behavioral traits as us; we were just better at it.

“Citation?”

It was in the last study you cited in your post:

Although both steps include parietal and cerebellar bulging, it is interesting to note that the first step (from geologic age group 1 to geologic age group 2) includes only subtle shape changes of brain regions associated with the anterior and middle cranial fossae. Because this part of the cranial base acts as the interface between the brain and the face, this suggests that the initial globularization was driven by changes in brain organization and not by changes in facial size and shape. The second step of globularization (from geologic age group 2 to 3) also comprises shape changes in these regions, suggesting that integration of the endocranium with the face might have contributed to endocranial globularization (14) but only in later phases of H. sapiens evolution.

“This implies a major change, either in our genotype, our culture, or the earth. But we apparently didn’t have the ability to come in contact with mammals unfamiliar with us before 50 ka because we were confined to Africa.”

That’s not completely true. Homo erectus made it to other continents and was arguably the most successful species of Homo. I’m not sure whether erectus would have had the ability to cause mass extinctions (though they could have been an invasive species). Of course we did better and faster the second time around; we were smarter than before. Over time the selection pressures shaping our brains became more and more social, until encephalization stopped in recent times (as social pressures can actually select against brain size). We probably hit that sweet spot of most efficient (or fastest) growth a little after Homo erectus, and then hit its peak around Cro-Magnon. I suggested the cultural brain hypothesis to you; it gives a powerful explanation of why the smartest animals (in the resource-collecting sense) also tend to be social: both pressures are required for an autocatalytic takeoff in encephalization, as each pressure by itself is inefficient.

This post doesn’t look too serious to me, firstly because it attempts to extend the human IQ scale through ratio IQ, which I suspect Pumpkin Person doesn’t believe is useful anyway, and then (perhaps as a consequence of the first inaccuracy) because it matches brain size to IQ, not log brain size. For humans, this simplification makes sense, as it makes practically no difference over our normal range. By way of example, allow me to use reductio ad absurdum to help explain why this can’t be used for such extreme cases.

Imagine the main subject of my website (the sperm whale) were an aquatic primate. Its brain averages about 7.8 kg. By your method this would make it 70 SD larger for the purposes of IQ calculation, whereas on a log scale it only has an advantage of log(7,800/1418) / log(1509/1418) = 27.4 SD. Likewise, if a chimp were human, its brain size should be reckoned at -20.3 SD, an expected intelligence difference of -98 IQ points from the Western human average, giving an expected IQ of -1 on the human scale.
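The two scalings can be compared side by side. The sketch below is a hypothetical reconstruction of the comment's arithmetic, not Pumpkin Person's method: it assumes the post's figures (mean 1418 cc, SD 91 cc, r = 0.32, human mean IQ 97, IQ SD 15), treats the sperm whale's 7.8 kg as 7800 cc the way the comment does, and defines one SD on the log scale as log(1509/1418), i.e. the ratio of mean plus one SD to the mean. The helper names are illustrative.

```python
import math

MEAN_CC = 1418.0       # average young American cranial capacity (from the post)
SD_CC = 91.0           # within-sex SD (from the post)
R_IQ_BRAIN = 0.32      # assumed IQ-brain size correlation (from the post)
IQ_SD = 15.0
HUMAN_MEAN_IQ = 97.0

def linear_z(brain: float) -> float:
    """SDs from the human mean on a raw (linear) brain-size scale."""
    return (brain - MEAN_CC) / SD_CC

def log_z(brain: float) -> float:
    """SDs on a log scale, taking one SD as log((mean + SD) / mean) = log(1509/1418)."""
    return math.log(brain / MEAN_CC) / math.log((MEAN_CC + SD_CC) / MEAN_CC)

def expected_iq(z: float) -> float:
    """Regression prediction: IQ shifts r * z IQ SDs for a z-SD brain-size difference."""
    return HUMAN_MEAN_IQ + R_IQ_BRAIN * z * IQ_SD

for name, brain in [("sperm whale", 7800.0), ("chimp", 400.0)]:
    print(f"{name}: linear {linear_z(brain):.1f} SD, log {log_z(brain):.1f} SD, "
          f"expected IQ (log scale) {expected_iq(log_z(brain)):.0f}")
```

With these inputs the log scale puts the sperm whale near 27.4 SD and the chimp near -20.3 SD (an expected IQ of about -1), matching the comment, while the linear scale gives about 70 SD and -11 SD respectively.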

“This post doesn’t look too serious to me, firstly because it attempts to extend the human IQ scale through ratio IQ, which I suspect Pumpkin Person doesn’t believe is useful anyway,”

No, I dismissed ratio IQs as not linear enough, and then estimated human toddler (and by extension ape) IQ from digit span, a true interval scale.

“Imagine the main subject of my website (the sperm whale) were an aquatic primate. Its brain averages about 7.8 kg.”

Sperm whales are wildly bigger than hominoids, so you’d want to adjust their brain size for body size before applying the regression. No need for logs.

You were not convincing in regard to your antagonism toward logarithms. A far more appropriate argument would be to take the largest and smallest human brains recorded from pathology-free subjects in a survey of your choice and see whether their geometric mean or arithmetic mean is closer to your value. The largest human brain I could find was from the Guinness Book of Records, at 2.3 kg, though I am unsure whether it is in this category; the smallest was 950 g. Anyway, I have not made too much effort, as I’m sure you already know the answer. Human body weight is certainly log-normally distributed, as is virtually any human metric, even esoteric things such as household wealth. It would be odd indeed if brain size proved an exception.
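The proposed test is easy to run on the two figures the comment mentions. A minimal sketch, with the caveats that these are only two extreme values, that the Guinness figure's pathology status is unknown, and that it compares brain mass in grams with cranial capacity in cc (treating them as interchangeable, as the thread does):

```python
import math

largest = 2300.0   # g, Guinness record brain cited in the comment (pathology status unknown)
smallest = 950.0   # g, smallest brain cited in the comment
target = 1418.0    # cc, the post's average young American cranial capacity

arithmetic = (largest + smallest) / 2
geometric = math.sqrt(largest * smallest)

# Which midpoint lands nearer the reported average?
closer = "geometric" if abs(geometric - target) < abs(arithmetic - target) else "arithmetic"
print(f"arithmetic mean: {arithmetic:.0f}")
print(f"geometric mean:  {geometric:.0f}")
print(f"closer to {target:.0f}: {closer}")
```

Here the arithmetic mean is 1625 and the geometric mean about 1478, so the geometric mean lands much nearer 1418, which is the direction the comment predicts; two data points are, of course, only suggestive.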

I much appreciated your reply, and would be very interested if you could show this was a rare exception. In fact, I’m so interested that it would set me modeling how such an exception (if it exists) could arise.

Height and weight deviate from the Gaussian curve at the extremes largely because of pathological conditions like pituitary problems or edema.

Sure, that’s a significant point to contend with, but in real life most factors multiply out, such as variations in average nerve size, in average nerve volume, and in nerve glial ratio. Actually, it’s very hard to think of effects that aren’t multiplicative. In studies of animal intelligence, where ranges are not so restricted, log brain weight is always used, and seldom is any justification given (or, in my mind, needed). In fact, if you read the text you will find the usage is so obvious to the authors that they don’t even bother to mention it (i.e., when ‘correlation with brain size’ is written, ‘correlation with log brain size’ is meant). It’s the opposite that needs to be justified.

Above, I meant to write ‘neuron count, nerve volume, and glial ratio’ as the multiple factors. I should also have mentioned that if you use a pan-primate intelligence metric that scales with log brain weight across that vast range (almost three orders of magnitude), you find that a similar change in intelligence among species within a similar class of ability elicits a similar change in their proportion of success on pass/fail tests. Thus if 65% of a species pass a test, then about 35% might pass for a species with a pan-species intelligence half a deviation lower, irrespective of where you are on the scale.
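The claim that multiplying factors yields a distribution that is skewed on the raw scale but close to symmetric on the log scale can be illustrated with a small simulation. This is a hypothetical sketch, not a model of real brains: it assumes three independent factors (loosely standing in for neuron count, nerve volume, and glial ratio), each varying uniformly by ±30% around 1, and compares the skewness of their product with that of its logarithm.

```python
import math
import random
import statistics

def skewness(xs):
    """Population skewness: mean cubed deviation divided by the SD cubed."""
    mu = statistics.fmean(xs)
    sd = statistics.pstdev(xs, mu)
    return sum((x - mu) ** 3 for x in xs) / (len(xs) * sd ** 3)

rng = random.Random(42)  # fixed seed so the run is reproducible
N_PEOPLE = 100_000
N_FACTORS = 3            # hypothetical stand-ins for the multiplicative factors

totals = []
for _ in range(N_PEOPLE):
    total = 1.0
    for _ in range(N_FACTORS):
        total *= rng.uniform(0.7, 1.3)  # each factor varies +/-30% around 1
    totals.append(total)

raw_skew = skewness(totals)
log_skew = skewness([math.log(t) for t in totals])
print(f"skew of raw product: {raw_skew:.2f}")
print(f"skew of log product: {log_skew:.2f}")
```

The raw product comes out clearly right-skewed (around 0.6), while its logarithm is much closer to symmetric; adding more multiplicative factors pushes the log scale further toward a normal shape, which is the usual rationale for working with log brain size.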

On a completely different issue, and one I am in great doubt over: your opening sentence.

“Today all human populations have the genetic potential to average IQs in the 85 to 105 range, with a species mean of say 97 (Euro norms).”

And the data I’ve seen make me wonder whether such statements might gloss over an unusual possibility.

Perhaps naively, I had expected that if you gave someone the perfectly average genome for a given race, their intelligence would reflect that average, as your first sentence seems (inadvertently) to imply. Then I found that IQ seems to be the most powerful predictor of longevity. It’s as if human IQ is determined less by good genes than by how many deleterious genes you are unlucky enough to be handed. If so, a population’s ‘average’ genome should score way above that “85 to 105 range”.

You may object that if this were the case, women, who have a more buffered genome than men, would be smarter by a significant margin. By my pencil-and-paper calculation, after adjusting for brain size they are, and of course they have the higher longevity. Have you any thoughts on this?
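The “average genome versus average person” point can be made concrete with a toy mutation-load model. Everything here is a hypothetical assumption for illustration: each of n sites independently carries a rare deleterious allele with probability p, every such allele costs the same small amount of ability, and load is the only source of variation. The consensus genome (the common allele at every site) then carries zero load, and the sketch computes how many standard deviations of load-driven variation it sits above the population mean. The per-allele cost cancels out of that ratio.

```python
import math

def zero_load_z(n_sites: int, freq: float) -> float:
    """SDs by which a zero-load (consensus) genome exceeds the population mean.

    Toy model (hypothetical): load ~ Binomial(n_sites, freq), and each deleterious
    allele costs the same amount, so the per-allele cost cancels out of the z-score.
    """
    mean_load = n_sites * freq
    sd_load = math.sqrt(n_sites * freq * (1 - freq))
    return mean_load / sd_load

def prob_zero_load(n_sites: int, freq: float) -> float:
    """Chance that a random individual carries no deleterious alleles at all."""
    return (1 - freq) ** n_sites

# Hypothetical parameter sets, chosen only to show how the gap scales
for n_sites, freq in [(100, 0.02), (1000, 0.02), (1000, 0.05)]:
    print(f"n={n_sites:5d} p={freq:.2f}: consensus genome "
          f"+{zero_load_z(n_sites, freq):.1f} SD, "
          f"P(zero load) = {prob_zero_load(n_sites, freq):.1e}")
```

With 1,000 sites at 2% frequency, for example, the zero-load genome sits about 4.5 SD above the population mean, yet almost nobody actually carries it (probability 0.98^1000, roughly 1.7 in a billion). How far any of this carries over to real IQ depends entirely on the assumed parameters.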

On yet another issue, your entire brain shape argument has a massive flaw. For small brains, maximizing intelligence should prove the main driver of evolution, but large brains are highly susceptible to concussion. This is due to the properties of brain tissue, in which standing waves become quite a problem. I would be greatly surprised if that isn’t the main selective pressure on brain shape above a threshold size.

So I would say that rationality is the property of cognitive systems of making good inferences (deductive and inductive) about the world, and intelligence is how well that works.

It’s like vision, which can be measured by different properties (acuity, number of colours) that show how well it works. In practical life, having 100 million colours and shades instead of 1 million or 100,000 won’t matter much. The same goes for extreme acuity (except in some jobs or sports).

So there will surely be a correlation between intelligence and success, because making the best inferences about the world is an advantage. People will be very demanding about practical inferences (knowing whether work starts at 7am or 8am, how much change you should get when you’ve paid for an item, etc.), and most, unless it’s their job, won’t care at all about how the visual system or their microwave works.

If your cognitive system works xK times better than others’, you would surely be annoyed by many of the propositions and much of the reasoning around you. That doesn’t mean people aren’t rational. We are all rational (except in some mental pathologies).

There is also a non-naturalistic meaning of rationality, which is the ability to justify one’s beliefs and actions (or at least their propositional or factual context). That’s probably why many people believe that one becomes intelligent through formal education, because learning how to do that is one major aspect of it.

There must be a link between the two, but it’s probably quite indirect. How good your reasons are is certainly correlated with intelligence, but it’s probably not intelligence itself. It’s more a matter of a scholastic mind.

Would practicing the symbol search subtest carry over to a brand-new test, like digit symbol coding (though it would most likely transfer only about half of your practice effect)? Or would there be zero transfer, since it is visual scanning, and different items and arrangements might not catch you the same way as repeating the same test multiple times?

Also, is it true that Neanderthals had better spatial awareness than modern humans, but a lot less verbal ability than humans?

My guess is digit symbol would show slightly more practice effect than symbol search. My guess is Neanderthals were better at spatial than verbal.

Peeps,

Do you think rounder-headed people could have higher IQs? I am asking based on this article.

Perhaps.